ChatGPT and You, Or I’m No Fool with Weaponized AI

Okay, I admit the title is clickbait to get you to read this article. There are articles about ChatGPT and its impact on civilization written daily, so I do not need to add to that body of minimal value. But weaponized artificial intelligence (AI) is a real-world problem and something that you need to be aware of.

In my interview with Gary S. Miliefsky, the esteemed publisher of Cyber Defense Magazine, on Cyber Defense TV, I talked about how advanced persistent threats (APTs) have weaponized AI: the malware uses it to check the environment it is running in and determine whether that environment is conducive to an attack. This is not theory or TV science fiction; this is real and has been happening for a while.

Weaponizing AI

Previously, the typical level of intelligence that malware had was to watch the system clock and activate the payload on a certain date and time. Next came detecting whether the malware was running in a virtual sandbox. The AI could detect whether specific hardware was available, and the malware would shut down if it was not. Threat actors have since developed AI modules that evaluate specific environmental conditions to determine whether the malware should activate. These environmental factors include the domain the system belongs to, the user accounts on the system, whether the malware is running in a virtual sandbox, what security software is running, and whether that software can be disabled.

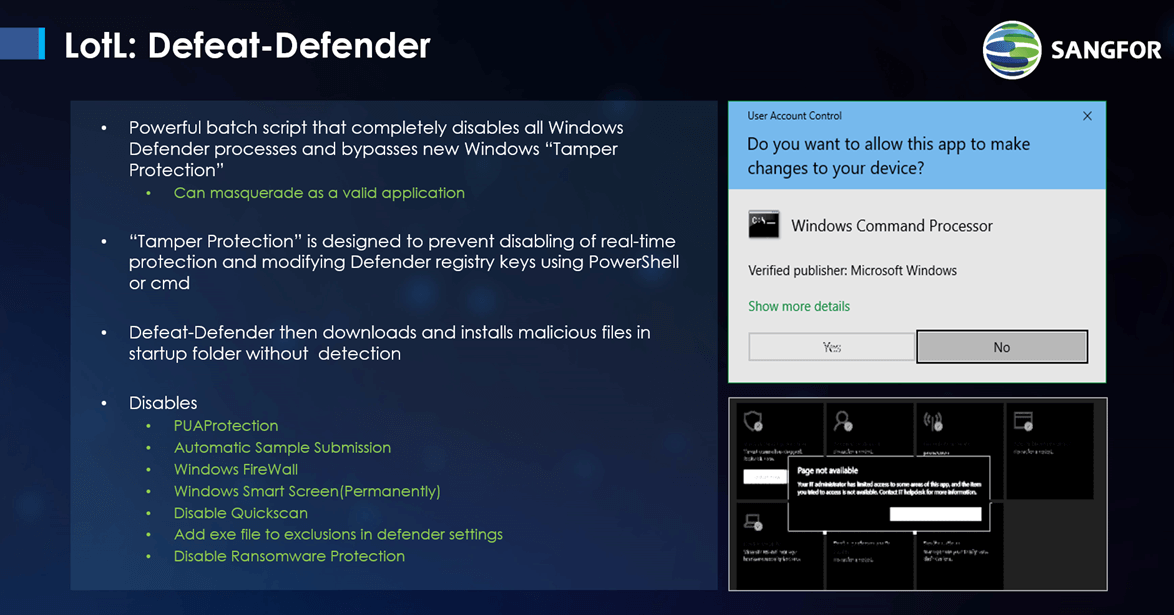

That last check is especially insidious because APTs can disable security software such as Windows Defender. Some APTs include a powerful batch script called Defeat-Defender, which can shut down Windows Defender on any Windows system, prevent it from restarting, and hide the fact that it has been disabled so that the administrator is unaware. The APT will then go to sleep for a period of time, say two weeks, and then wake up to check whether Defender has been re-enabled. If Defender has not been restarted, the malware will continue its checks to determine whether it should activate. If Defender has been reactivated, the APT will go back to sleep, never to return.
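Because a script like Defeat-Defender hides the disabled state from the administrator, one defensive option is to check Defender's status independently and on a schedule. The Python sketch below is illustrative only. It assumes the status report has already been exported as JSON, for example with PowerShell's `Get-MpComputerStatus | ConvertTo-Json`. The function name `disabled_protections` and the choice of which fields to require are assumptions made for this example, not part of any product.

```python
from __future__ import annotations

import json

# Boolean fields reported by PowerShell's Get-MpComputerStatus cmdlet.
# Which ones to treat as mandatory is an assumption of this sketch.
REQUIRED_PROTECTIONS = (
    "AMServiceEnabled",
    "AntivirusEnabled",
    "RealTimeProtectionEnabled",
    "BehaviorMonitorEnabled",
    "IsTamperProtected",
)


def disabled_protections(status_json: str) -> list[str]:
    """Return the required protections that are off or missing in the report."""
    status = json.loads(status_json)
    # A missing field is treated as a failure: silence is not proof of health.
    return [name for name in REQUIRED_PROTECTIONS if status.get(name) is not True]


if __name__ == "__main__":
    # Hypothetical report from a host where real-time protection was turned off.
    sample_report = json.dumps({
        "AMServiceEnabled": True,
        "AntivirusEnabled": True,
        "RealTimeProtectionEnabled": False,
        "BehaviorMonitorEnabled": True,
        "IsTamperProtected": False,
    })
    for name in disabled_protections(sample_report):
        print(f"ALERT: {name} is not enabled")
```

A compromised host could falsify its own report, so a check like this is more useful when the result is collected and compared by a separate management system rather than trusted locally.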

Figure 1 Defeat-Defender (source: Sangfor Technologies)

The best example of weaponized AI being leveraged is the infamous SolarWinds supply chain attack. This attack targeted numerous organizations in the United States and Europe, including high-tech companies, communications companies, banks, schools, and government departments.

In December 2020, both FireEye and Microsoft detected lateral movement attacks that were later found to be part of a global operation. The attackers, attributed to threat group APT29, implanted malicious code into a core SolarWinds DLL file and distributed the backdoored software through SolarWinds’ official website. Using a technique called Living off the Land (LotL), the malicious DLL was loaded by the valid signed executable, SolarWinds.BusinessLayerHost.exe, and was therefore treated as part of a trusted process. Security software typically does not scan trusted processes.

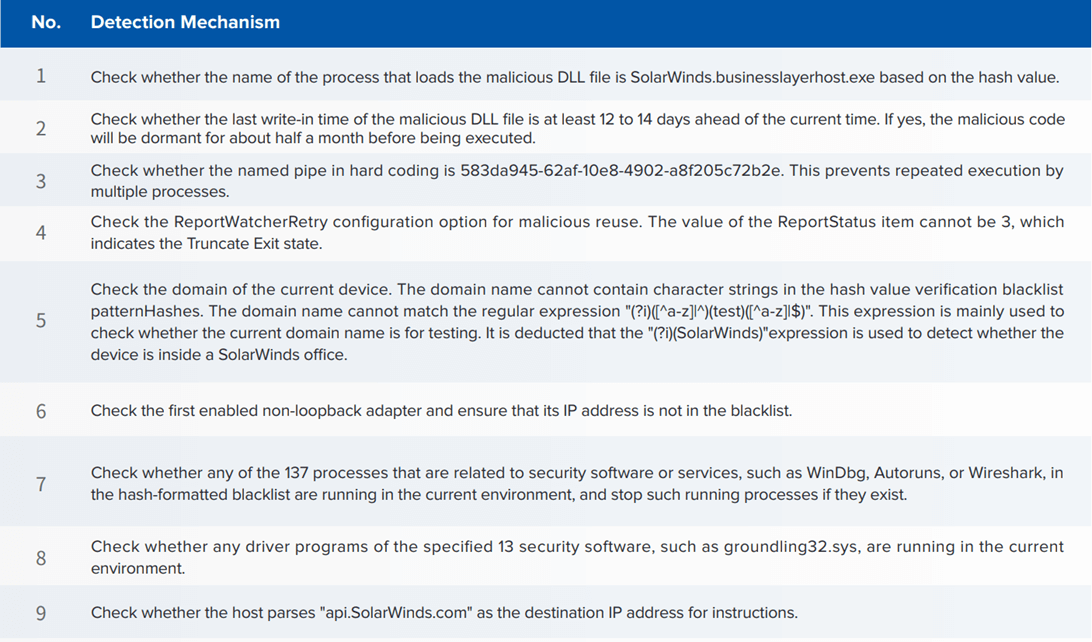

Once the malicious process was started, it began running a checklist of nine environmental tests (see Figure 2, SolarWinds Strict Environment Check) to see whether it could activate undetected.

Figure 2 SolarWinds Strict Environment Check

Fighting AI with AI

The effects on businesses attacked with weaponized AI are significant and include:

- Ransomware Infection: Attackers use weaponized AI attacks to bypass security systems, build connections with their command & control (C&C) server, and automatically download ransomware executables.

- Data Breach: Attackers split sensitive data into chunks, attach the chunks as hostnames to computer-generated domain names, and send these as DNS requests to their own servers. There, the hostnames are reassembled into the exfiltrated data.

- Assets Under Attacker Control: Attackers use compromised assets for illegal activity (cryptomining, DDoS as a service, etc.).

These cause disruption to business operations with great financial and operational impact.
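The DNS-based exfiltration described in the Data Breach item above tends to leave a recognizable pattern in query logs: many unique, long hostnames under a single parent domain. The Python sketch below shows one simple way to look for that pattern. It is a minimal illustration under stated assumptions: the function names, the thresholds (`min_unique`, `min_avg_len`), and the naive "last two labels" definition of a parent domain are all invented for this example, and a production tool would use the Public Suffix List and tuned thresholds.

```python
from __future__ import annotations

from collections import defaultdict


def parent_domain(hostname: str) -> str:
    """Naive parent domain: the last two labels of the hostname (an assumption)."""
    labels = hostname.rstrip(".").lower().split(".")
    return ".".join(labels[-2:])


def suspicious_domains(queries: list[str], min_unique: int = 20,
                       min_avg_len: int = 25) -> list[str]:
    """Flag parent domains queried with many unique, long subdomain prefixes."""
    prefixes_by_parent: dict[str, set[str]] = defaultdict(set)
    for query in queries:
        name = query.rstrip(".").lower()
        parent = parent_domain(name)
        # Everything left of the parent domain is where smuggled data would sit.
        prefix = name[: -len(parent)].rstrip(".")
        if prefix:
            prefixes_by_parent[parent].add(prefix)

    flagged = []
    for parent, prefixes in prefixes_by_parent.items():
        average_length = sum(len(p) for p in prefixes) / len(prefixes)
        if len(prefixes) >= min_unique and average_length >= min_avg_len:
            flagged.append(parent)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical log: 25 hex-encoded chunks sent to one made-up domain,
    # mixed with ordinary lookups.
    log = [f"{i:032x}.x7f3k.example" for i in range(25)]
    log += ["www.example.com", "mail.example.com"]
    print(suspicious_domains(log))
```

Counting unique prefixes per parent domain, rather than judging each hostname alone, reflects how the data is spread across many requests; legitimate services with long generated hostnames can still trigger it, so results are leads to investigate rather than verdicts.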

AI-enabled malware can breach and infect an organization within 45 minutes. No human incident response team can detect and respond quickly enough. Organizations need tools with purpose-built AI models looking for specific behaviors. General-purpose AI models do not have the fidelity to detect different types of intermittent behavior over long periods of time. Behavioral detection should include building baselines of network traffic, user behavior, and application behavior. The models would then identify anomalous deviations, alert, and use SOAR (security orchestration, automation, and response) to command security products to respond using automated playbooks. This reduces the time needed to detect and respond to an attack from days and weeks to a matter of minutes.
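To make the baseline-and-deviation idea concrete, here is a deliberately small Python sketch. It is not how any particular product works: it assumes a single metric (for instance, outbound megabytes per hour for one host), uses a plain z-score in place of a purpose-built model, and the names `build_baseline`, `is_anomalous`, and the threshold of three standard deviations are assumptions chosen for illustration. In a real deployment, the alert would be handed to a SOAR playbook rather than printed.

```python
from __future__ import annotations

from statistics import mean, stdev


def build_baseline(history: list[float]) -> tuple[float, float]:
    """Summarize normal behavior as (mean, sample standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value: float, baseline: tuple[float, float],
                 threshold: float = 3.0) -> bool:
    """Return True when value is more than `threshold` deviations from the mean."""
    average, deviation = baseline
    if deviation == 0:
        # A perfectly flat history: any change at all is a deviation.
        return value != average
    return abs(value - average) / deviation > threshold


if __name__ == "__main__":
    # Hypothetical hourly outbound traffic (MB) observed during normal operation.
    normal_hours = [100, 110, 95, 105, 98, 102, 107, 99]
    baseline = build_baseline(normal_hours)

    for observed in (104, 400):
        if is_anomalous(observed, baseline):
            print(f"ALERT: {observed} MB deviates from baseline, trigger playbook")
        else:
            print(f"OK: {observed} MB is within the normal range")
```

A single-metric z-score will miss the slow, intermittent behavior mentioned above, which is the argument for models built around specific behaviors; the sketch only shows the shape of the detect-then-respond loop.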

Most organizations think that their security architecture is robust enough to combat APTs. Yet ransomware is almost 100% successful, which means the most popular firewalls and endpoint protection products are not sufficient to detect and block weaponized AI APTs, let alone go back and detect a breach after the fact. The next state-of-the-art security solutions must be AI-enabled to detect the AI being used against them. The attackers may currently have the upper hand, but you can start evaluating new, smarter tools to fight back.

The original article was published in Cyber Defense Magazine.