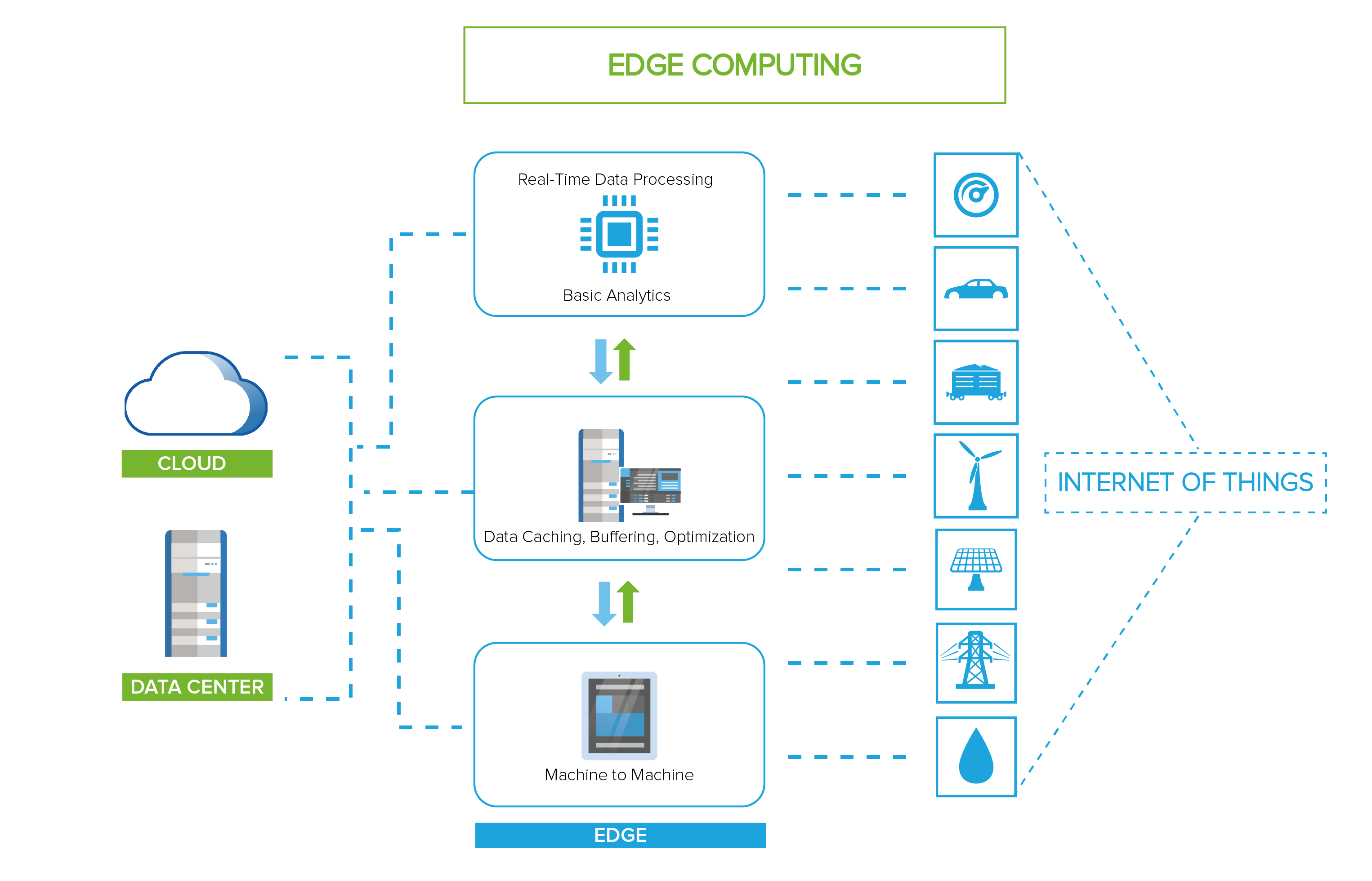

Processing data as close as possible to its source is a theme that is currently captivating the IT world. A great deal of workload processing runs in virtual environments, so it is fair to ask whether it makes sense to virtualize an Edge Server.

The exact meaning of edge computing, and how it is implemented outside the data center, is still up for debate. Some think of edge computing in terms of smart devices. Others think of intermediate gateways that process customer data. Still others imagine micro data centers that meet the needs of remote workers or satellite offices.

Despite the disparity of perspectives, they all share a common feature: the data generated by the customer is processed on the periphery of the network, as close as possible to its source.

Edge computing sits at the opposite end of the spectrum from a centralized data center. Edge Servers are administered remotely, often over the Internet, while peripheral sites typically have space and power restrictions that make it difficult to add capacity to an existing system or significantly change the architecture. In some cases, a peripheral site may require specialized hardware or need to connect to other remote sites.

Many factors are pushing organizations to the edge, especially mobile computing and IoT, which generate huge amounts of data. Mega data centers can no longer manage that volume, resulting in increased data latency and network bottlenecks.

Emerging technologies are making edge computing more practical and even more cost-effective than traditional approaches, as they address the limits of the centralized model.

Edge Computing Server and Virtualization

To keep data processing at the edge as efficient as possible, some organizations run containers or serverless architectures on bare metal to avoid hypervisor and VM overhead.

In some cases, this might be a good approach, but even in a peripheral environment, virtualization offers advantages in flexibility, security, maintenance, and resource utilization. Virtualization is likely to remain an important component in many edge scenarios, at least for intermediate gateways or micro data centers. Even if applications run in containers, they can still be hosted on virtual machines.

Researchers see the VM as an essential component of edge computing, and believe administrators can use VM overlays to enable faster provisioning and move workloads between servers. But researchers aren't the only ones focusing on bringing virtualization to the edge.

Sangfor is committed to edge computing solutions that can virtualize and manage the entire infrastructure by offering hyper-converged infrastructure software based on virtual storage. Sangfor aCloud software can be used at a remote site to support edge scenarios through aCMP (Cloud Management Platform), which provides a system for managing and monitoring both local and remote sites.

Although edge computing doesn't necessarily involve virtualization, it doesn't rule it out, and in fact, often embraces it.

Centralized Management of Remote Sites

Edge computing brings a number of challenges for administrators hoping to manage virtual environments. The lack of industry standards governing edge computing only increases complexity.

As IT resources move from the data center to the periphery of the network, asset and application management is becoming increasingly difficult, especially as much of it is done remotely. Administrators need to find ways to deploy these systems, perform ongoing maintenance, monitor infrastructure and applications for performance issues, and address issues like fault tolerance and disaster recovery.

If an IT team manages a single peripheral environment, they should be able to handle it without much difficulty. But if the team has to manage multiple peripheral environments, and each performs different functions and is configured differently, the difficulties grow exponentially. For example, some systems may run virtual machines, while some may run containers, and some might even do both. Systems could also work on different hardware, use different APIs and protocols, and run different applications and services.
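To make the effect of heterogeneity concrete, here is a minimal sketch that groups a set of edge sites by their configuration. The `EdgeSite` type, its fields, and the site names are all hypothetical and chosen only for illustration. Each distinct combination of runtime and hardware is a separate profile the team has to deploy, patch, and monitor.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeSite:
    """One remote site; every field here is a hypothetical illustration."""
    name: str
    runtimes: frozenset  # e.g. {"vm"}, {"container"}, or both
    hardware: str        # free-form hardware label


def group_by_profile(sites):
    """Group site names by their (runtimes, hardware) combination."""
    profiles = defaultdict(list)
    for site in sites:
        profiles[(site.runtimes, site.hardware)].append(site.name)
    return dict(profiles)


# Made-up inventory: four sites, some VM-only, some container-only, one mixed.
sites = [
    EdgeSite("branch-a", frozenset({"vm"}), "x86"),
    EdgeSite("branch-b", frozenset({"container"}), "arm"),
    EdgeSite("branch-c", frozenset({"vm", "container"}), "x86"),
    EdgeSite("branch-d", frozenset({"vm"}), "x86"),
]

profiles = group_by_profile(sites)
print(len(sites), "sites,", len(profiles), "distinct profiles to manage")
```

In this made-up inventory, only two of the four sites share a profile, so the team maintains three separate sets of procedures rather than one.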

Administrators must be able to coordinate all of these environments, while allowing them to operate independently. Edge computing is an industry in its infancy, and network management capabilities have yet to catch up.

But management is not the only challenge. An edge computing server often has resource constraints, which can make it difficult to change the physical structure or cope with fluctuating workloads. These challenges go beyond capabilities like VM migration.

In addition, administrators may face interoperability issues between source devices and edge systems, as well as between systems on multiple fronts. This is made even more difficult by the different configurations and lack of industry standards.

One of the biggest challenges administrators face is ensuring that sensitive data is protected and privacy is preserved. The distributed nature of edge computing increases the number of attack vectors, which makes the entire network more vulnerable to attack, with differing configurations increasing the risks further.

For example, one system could run containers in a VM and another could run them on bare metal, resulting in a disparity in the methods IT uses to control security. Distributed systems can also make it more difficult to address compliance and regulatory issues. Finally, the risk of undetected intrusion is even greater given the difficulties of managing remote and distributed environments.

Why Sangfor?

Sangfor HCI enables unified management of distributed environments by centralizing workflow management, logs and alarms, VM management, backups, and disaster recovery.
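As a generic illustration of what centralizing alarms can mean in practice, the sketch below merges per-site alarm streams into a single timeline. It is not Sangfor HCI's API: the `Alarm` type, the `merge_alarms` helper, and the sample data are assumptions made for this example, and each site's stream is assumed to already be sorted by timestamp.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Alarm(NamedTuple):
    """Hypothetical alarm record, not a vendor data format."""
    timestamp: float  # seconds; used as the ordering key
    site: str
    message: str


def merge_alarms(*streams: Iterable[Alarm]) -> Iterator[Alarm]:
    """Lazily merge per-site streams that are each sorted by timestamp."""
    return heapq.merge(*streams, key=lambda alarm: alarm.timestamp)


# Made-up sample data for two remote sites.
site_a = [
    Alarm(10.0, "site-a", "disk latency high"),
    Alarm(30.0, "site-a", "backup failed"),
]
site_b = [Alarm(20.0, "site-b", "VM unreachable")]

timeline = list(merge_alarms(site_a, site_b))
for alarm in timeline:
    print(alarm.timestamp, alarm.site, alarm.message)
```

Because `heapq.merge` is lazy, the central console never has to hold every site's full log in memory at once, which matters when many remote sites report in.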

Sangfor Technologies is an APAC-based, globally leading vendor of IT infrastructure solutions specializing in Network Security and Cloud Computing. Visit us at www.sangfor.com to learn more about Sangfor’s Security solutions, and let Sangfor make your IT simpler, more secure and more valuable.