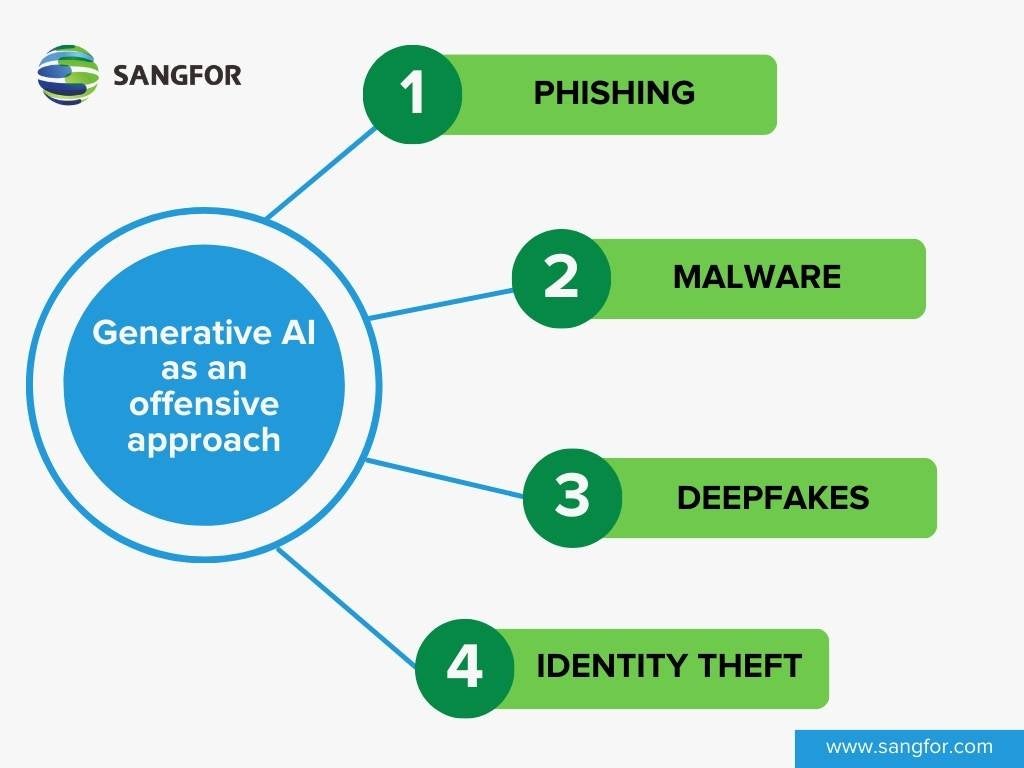

Generative AI is a branch of artificial intelligence that creates new data or content based on existing data or content. Its growing use is both a boon and a bane. Because it can learn and generate content on its own, it can pose significant challenges in the field of cybersecurity. Malicious actors can use it to launch more sophisticated and convincing cyberattacks, such as phishing, malware, deepfakes, and identity theft.

As threats evolve, the cybersecurity measures we use have to evolve as well. AI has been used to revolutionize cybersecurity for many years now, and most cybersecurity technology today has some element of AI involved. By 2025, the global AI in cybersecurity market is expected to reach US$ 38.2 billion. Cybersecurity relies on efficiency, accuracy, and speed, all of which are hallmarks of AI-driven solutions. In this article, we will explore how generative AI can be used for both offensive and defensive purposes in cybersecurity, and what best practices and strategies can mitigate the potential threats and leverage the benefits of this emerging technology.

Generative AI as an offensive approach in Cybersecurity

Generative AI leverages deep learning techniques and neural networks to create content that is almost identical to content made by humans. It can even include mistakes and punctuation errors, so that the output looks like something a person wrote. As these techniques keep evolving, machine-generated content becomes nearly indistinguishable from human work. That is why malicious actors are using it for their own purposes. Some examples are:

- Phishing: Generative AI can be used to create highly realistic and convincing phishing emails, imitating legitimate organizations or individuals. Because it can read the HTML of a webpage, it can also generate fake login pages or websites that closely resemble authentic ones, tricking users into revealing sensitive information. The complete setup can easily deceive a person.

- Malware: The technology can develop self-learning polymorphic malware whose code constantly evolves and changes based on the target system. After a failed attempt, the malware can learn and improve its own code so that it is better able to exploit the vulnerabilities of the target system. If an enterprise has not updated its security software, the malware can attack those unpatched vulnerabilities.

- Deepfakes: Generative AI can create sophisticated deepfake videos, audio, or images, manipulating visual and audio content to deceive and mislead viewers. This can be used to impersonate a celebrity or other public figure and cause reputational damage. It can also be used for phishing and scams, or to spread false information.

- Identity theft: When users are deceived, they are likely to hand over personal details, including passport details, bank account numbers, and other personal information. Using this information, generative AI can forge documents such as passports or driving licenses that are nearly indistinguishable from the real ones, and so falsify your identity.
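
One simple defensive check against the lookalike login pages described above is to compare a link's host name with the domains an organization actually uses. The following is a minimal sketch, not a complete anti-phishing control: `TRUSTED_DOMAINS`, `looks_like_spoof`, and the distance threshold are all assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical allow-list; a real deployment would load its own domains.
TRUSTED_DOMAINS = {"example.com", "sangfor.com"}


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed row by row with dynamic programming."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def looks_like_spoof(url: str, max_distance: int = 2) -> bool:
    """Flag hosts that are close to, but not exactly, a trusted domain."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in TRUSTED_DOMAINS:
        return False
    return any(edit_distance(host, d) <= max_distance for d in TRUSTED_DOMAINS)


if __name__ == "__main__":
    for link in ("https://www.sangfor.com/login", "https://sangf0r.com/login"):
        print(link, "->", "suspicious" if looks_like_spoof(link) else "ok")
```

A check like this only catches near-miss spellings of known domains. It does not inspect page content, and it ignores unrelated domains and subdomain tricks, so it would complement rather than replace email filtering and user training.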

Generative AI as a defensive approach in Cybersecurity

Security professionals use the same technology to develop defensive mechanisms. According to Forbes, 76% of enterprises have already prioritized AI and machine learning in their IT budgets. These are some of the ways that AI can be used in cybersecurity protocols:

- Quickly analyzing large amounts of data: generative AI can help security teams process and understand massive volumes of data from various sources, such as logs, alerts, network traffic, etc. This can help identify patterns, trends, and anomalies that may indicate potential threats or vulnerabilities.

- Detecting anomalies and vulnerabilities: generative AI can help security teams discover and prioritize the most critical and relevant risks in their systems, such as misconfigurations, outdated software, unauthorized access, etc. This can help prevent or mitigate cyberattacks before they cause damage.

- Automating repetitive processes: generative AI can help security teams automate and streamline various tasks that are tedious, time-consuming, or prone to human error, such as incident response, threat hunting, malware analysis, etc. This can help improve efficiency, accuracy, and productivity.

- Enhancing data privacy and security: generative AI can help security teams protect sensitive data and information from unauthorized access or leakage, by creating synthetic data that mimics the real data without revealing its identity or content. This can help reduce the risk of data breaches or misuse.

- Endpoint Security & Monitoring: The endpoints of a network are generally the most vulnerable parts. This is why most endpoint security solutions choose AI-powered technology to identify malware, trigger alerts, or create baselines for acceptable behavior. Generative AI can be used to create scripts and virtual scenarios that identify network anomalies and detect vulnerabilities. Companies can then make informed decisions on improving security at their premises.
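
The idea of a baseline for acceptable behavior can be illustrated with a small statistical example. This is only a sketch: it uses a plain z-score over historical counts as a stand-in for the AI models a real product would use, and the function names, the sample numbers, and the threshold of 3 are assumptions.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Summarize normal behavior as (mean, sample standard deviation).

    `history` needs at least two values, for example failed logins per hour.
    """
    return mean(history), stdev(history)


def find_anomalies(baseline, observations, threshold=3.0):
    """Return the (label, value) pairs that deviate strongly from the baseline."""
    mu, sigma = baseline
    flagged = []
    for label, value in observations:
        if sigma == 0:
            # A perfectly flat baseline: any change at all is unusual.
            is_anomaly = value != mu
        else:
            is_anomaly = abs(value - mu) / sigma > threshold
        if is_anomaly:
            flagged.append((label, value))
    return flagged


if __name__ == "__main__":
    # Illustrative numbers only, not real measurements.
    normal_hours = [4, 6, 5, 7, 5, 6, 4, 5]
    today = [("09:00", 6), ("10:00", 48), ("11:00", 5)]
    print(find_anomalies(build_baseline(normal_hours), today))
```

The same pattern applies to other signals mentioned above, such as alert volume or outbound traffic per endpoint. The threshold controls the trade-off between missed events and false alarms.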

AI can be used to combat AI-generated attacks. Companies can take advantage of this technology by adopting generative AI tools and solutions that are tailored to their specific needs and challenges. They can also leverage the expertise and guidance of trusted partners and vendors who have experience and knowledge in implementing generative AI for cybersecurity.

Enterprise Best Practices to build awareness against Generative AI Risks

We must learn to live with AI and machine learning technologies. As discussed earlier, they can be either a boon or a bane. That is why enterprises should adopt best practices that raise awareness among employees and educate them about upcoming threats.

Employee Training and Education

Workers need to be properly educated and informed about the generative AI being used. Human error is one of the main reasons that cyberattacks are so successful. Ensure that your team stays clued up and ready to respond. Train them to follow security measures and keep their systems updated to the latest available versions.

- Cybersecurity Workshops: Conduct regular workshops and seminars that focus on the specific threats posed by generative AI, showcasing real-world examples and practical strategies for detection and mitigation. Samsung learned this the hard way when employees entered sensitive code into ChatGPT, which led the company to ban use of the chatbot. Limiting access and creating sandboxes to isolate data might help to avoid this issue.

- Awareness Campaigns: October is observed as Cybersecurity Awareness Month (CSAM) every year. CISA launches awareness campaigns that keep employees informed about the evolving landscape of generative AI threats and best practices for safeguarding sensitive information. Sangfor also generates awareness for CSAM through various blogs and social media campaigns.

- Incident Response Training: Arrange a workshop led by a professional incident response team, with specialized training on recognizing and responding effectively to generative AI-driven cyberattacks. This will ensure quick and accurate incident resolution. After the workshop, you can establish clear incident reporting protocols that enable employees to promptly report any suspicious generative AI-related activities, encouraging a culture of accountability.

- Feedback Mechanisms: Establish channels for employees to provide feedback and share insights regarding generative AI risks, allowing for ongoing improvement of security strategies. You can create a warm and supportive environment so that employees are comfortable enough to report such incidents.

- Documentation and Policy Development: Implement clear and concise policies regarding the use of generative AI tools within the organization, outlining permissible applications and emphasizing ethical considerations. Maintain detailed documentation and log reports of generative AI-related incidents and responses, facilitating post-incident analysis and the development of proactive measures.
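
A policy on permissible use of generative AI tools can be backed by a simple technical guard that strips obviously sensitive strings from text before it leaves the organization. The following is a rough sketch under stated assumptions: the two patterns and the `redact` helper are hypothetical, and a real policy would define its own rules for what counts as sensitive.

```python
import re

# Illustrative patterns only; real rules would come from the security team.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SECRET": re.compile(
        r"\b(?:api[_-]?key|token|password)\s*[=:]\s*\S+", re.IGNORECASE
    ),
}


def redact(text):
    """Replace sensitive matches with placeholders.

    Returns the cleaned text plus the labels of the patterns that matched,
    which can be written to an incident or audit log.
    """
    findings = []
    for label, pattern in REDACTION_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED_{label}]", text)
        if count:
            findings.append(label)
    return text, findings


if __name__ == "__main__":
    prompt = "Debug this for alice@example.com: api_key = abc123"
    cleaned, found = redact(prompt)
    print(cleaned)
    print("matched rules:", found)
```

Pattern matching of this kind misses proprietary source code and other context-dependent secrets, so it works best alongside the access limits, sandboxes, and training described above.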

Can Generative AI Replace Cybersecurity Professionals?

Although artificial intelligence is often met with hostility when it comes to replacing human intelligence, the AI tools in use are still just that: tools. While they offer insight and advantages, cybersecurity still relies on the innovation and integrity of humans to succeed. AI in cybersecurity can only get so far without the help of its human counterparts. These tools and platforms empower and enable better cybersecurity technology and allow us to focus on other elements. So, no, AI in cybersecurity will not be able to replace cybersecurity professionals for a long time to come. Human expertise is what drives AI to reach such incredible standards.

Sangfor Technologies provides premium solutions for your company that work seamlessly with cybersecurity AI and use specific protocols to ensure that the best safety measures are maintained. The Sangfor Network Secure Next Generation Firewall (NGFW) is used to identify malicious files at both the network level and endpoints. The AI-powered and cloud-based Neural-X sandbox is also used for the isolation and critical inspection of suspicious files. Additionally, Sangfor’s advanced Endpoint Secure technology provides integrated protection against malware infections and APT breaches across your entire organization's network. It also makes use of an AI Detection Engine for improved security.

For more information on Sangfor’s cyber security and cloud computing solutions, visit www.sangfor.com.